Artificial intelligence

Meet the AI brain that can see anything

Covariant CEO Peter Chen tells Giacomo Lee about the AI startup’s work to develop a computer vision AI brain that could transform operations in the factories and warehouses of the future.

Forget humanoid robots or dancing mechanical dogs. If you want a real technological challenge, try making an AI brain which can sort items in a retail warehouse or an automotive factory, or any other industrial setting for that matter.

Covariant has taken on this challenge, with some success. Having raised $147m in funding so far with total valuation undisclosed, the Berkeley-based startup is one of GlobalData’s AI unicorns predicted to go public next year with a valuation of $1bn+, and the only name on that hot list dealing with the burgeoning theme of smart robotics.

That’s all down to the company’s signature, off-the-shelf, universal AI for robots, known as the Covariant Brain. CEO Peter Chen believes the product could transform operations in retail and other sectors, while demonstrating the potential of AI-powered robots to disrupt industries and create the factory of the future.

Even more intriguingly, these robots can ‘see’ transparent objects, which may give pause to those sceptical as to whether a robot can truly replace a human in the industrial workplace.

Deep tech that can ‘see’ anything

“Covariant’s AI-powered robotics solutions in particular are designed to see, learn, and interact with the world around them so they can handle complex and ever-changing operations in a warehouse setting,” Chen says about the AI brain powering Covariant’s robotic arms. “That means they can autonomously handle, whether it’s pick, pack or ship, millions of different types of items that can exist in a modern warehouse.”

The software involved relies on what Chen calls various ‘breakthrough’ AI technologies, primarily in deep reinforcement learning (RL). A type of machine learning, deep RL sees neural networks learning from experience, through trial and error, rather than, as is more usual, from historical data. Such networks are ‘deep’ in that they stack many layers of artificial neurons, an architecture loosely inspired by the human brain rather than by conventional computing.

“Deep RL is one of the core technologies that we pioneered in academic research, continued to advance as part of research at Covariant and commercialised into the Covariant Brain,” says Chen. “We believe deep RL is a critical piece of the puzzle to build capable AI systems for robotics because it fundamentally gives AI the ability to make decisions as opposed to just understanding.”

The final product is a robotic arm that ‘sees’ what it picks, packs and ships from moving lines and shelves. It does so through computer vision, where deep RL software meets conventional camera hardware.

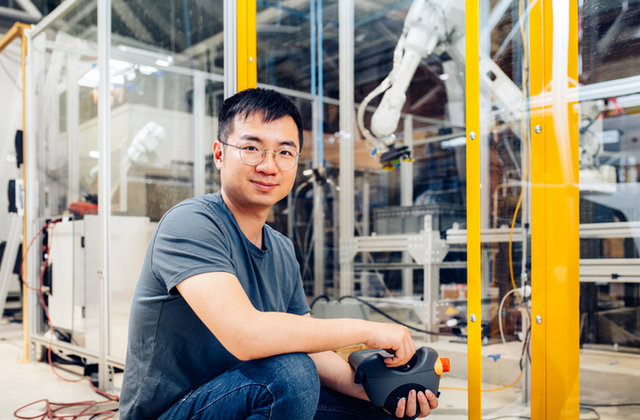

Peter Chen is the CEO and co-founder of Covariant.

This proprietary vision system combines cameras and software to provide perception capabilities to robots. With AI at the core of the system, this allows the Covariant Brain to distinctly ‘see’ a wide variety of objects regardless of the packaging type: transparent, poly-wrapped, overlapping, deformed, partially obscured, reflective and more.

The tech can also see “the orientation of the scene – packed chaotically, objects placed in divided bins, distributed in obscured positions, stacked on top of each other,” Chen adds, “so it understands where to grasp”.

No lidar [a method for determining ranges by targeting an object with a laser and measuring the time for the reflected light to return to the receiver] is involved, but Chen finds the comparison between computer vision and lidar to be an interesting one. “While lidar has a lot of successes in the self-driving car industry, we have found its use in AI robotics, in particular in logistics, to be limited. For example, it’s typically challenging for lidar systems to perceive transparent or reflective objects in a robust way.”

As Chen explains, transparent objects present challenges for robots both in knowing where they are and in understanding how to best handle them.

“AI vision systems in a warehouse setting look for patterns to help distinguish objects and determine how to pick them. This becomes challenging when differences are difficult to distinguish or when it’s unclear where to grasp the object. That’s often where other providers fail. Characteristics like size, packaging type, colour and shape all influence how challenging an object is to pick.

“One of our main customers is a third-party logistics firm for primarily health and beauty companies,” says Chen. “As you can imagine, products in this area are usually encased in transparent reflective packaging and also small. That’s quite the picking challenge. And that’s one part of our secret sauce: our system’s ability to see and immediately understand how to pick up these items accurately and quickly, even if it’s never seen that specific product before.”

From UC Berkeley to ABB

The deep tech behind Covariant’s product dates back to Chen’s academic research with company co-founders Rocky Duan, Tianhao Zhang and Pieter Abbeel. They met in 2016 while conducting AI research at UC Berkeley’s Artificial Intelligence Lab and at non-profit OpenAI. A year later the researchers decided to take their work into the commercial market by founding Embodied Intelligence, later renamed Covariant.

“After coming from Berkeley and OpenAI, I’ve had the experience of being in a purely research-based setting,” says Chen. “At Covariant, which is a bit of both because our team is always conducting R&D in our corporate lab that keeps us at the forefront of AI robotics, I enjoy a combination.

“Making breakthroughs is exciting on its own and then witnessing the value that they can bring to customers starting day one is satisfying as well. I love building intelligent robots, but I also want them to be practical.”

That practicality has seen Covariant grow from university labs into one of the world’s top AI unicorns. Its most recent Series C funding was, according to GlobalData’s deals database, to the tune of $80m, and the company has already established a partnership with robotics giant ABB for an undisclosed amount.

The ABB relationship makes sense as more of the robotics old guard cotton on to the power of AI in future factories. AI technologies, most notably machine learning techniques such as deep RL, are integral to the development of intelligent industrial robots, which can anticipate and adapt to certain situations by interpreting data from an array of sensors.

The AI brain box

But Covariant isn’t simply looking to form cogs in an established industry. With its off-the-shelf AI brain, it hopes to bring smart robotics into any kind of industrial setting without the need for installation or adaptation of the existing software: whether for apparel, healthcare or food operations.

“These deep neural networks are designed to be universal so when they encounter a new task, they don’t need to be re-trained to have strong out-of-the-box performance,” Chen says. “The Covariant Brain is powering a wide range of industrial robots to manage order picking, putwall, sorter induction – all for companies in various industries with drastically different types of products to manipulate.

“(This) demonstrates the Covariant Brain can help robots of different types to manipulate new objects they’ve never seen before in environments where they’ve never operated.”

Clients already include retail logistics giants KNAPP and Invata, as well as electrical supply wholesaler Obeta and the Belgian Post Group.

What, then, is the Covariant elevator pitch on its relatively high-concept AI brain?

“What really matters is, ‘Are we going to help them run their business better?’,” says Chen. “Our main focus is the logistics sector right now and we start by discussing the business challenges specific to them – most often, labour shortage and supply chain fragility. AI robotics offers a reliable, financially viable solution that helps warehouses alleviate the pains of labour shortage and in general run more efficiently.

“Generally speaking, our customers – and the broader population – still have a fairly nascent understanding of AI, let alone the different types and methodologies. Our clients that are most successful know enough about AI to identify key performance metrics to do a rigorous evaluation of the different vendor options.

“Having said that, at the end of the day, what our customers care about is that our products actually work when deployed in their warehouses and help them increase efficiency.”

From OpenAI to IPO?

This, says Chen, is the reason Covariant continues to see increasing adoption and growth among its customers.

“Autonomous order picking has long been seen as the holy grail for warehouse automation in the robotics world,” he says. “It’s a very hard problem, but thanks to our team’s fundamental advances in research and engineering, we’ve achieved production-grade autonomy for a range of industries over the past year.

“Modern AI is opening up a whole new generation of robotic applications and we’re positioned well to capitalise on the heightened market demand.”

GlobalData predicts that Covariant will go public by 2023. When asked whether this forecast is overly optimistic or overly cautious, Chen replies: “Haha, I can’t say. We’re focused on taking care of our customers and ramping up to meet the increasing interest from prospects.

“We’re passionate about bringing AI robotics to every sector. We believe there’s an opportunity for Covariant in so many spaces but fitting the technology to the use case is critical. We’re starting with logistics and being very intentional about our progress as we plan our future and where our software can keep learning from prior scenarios.”